AI Behavioral Control.

On-Premises. Real-Time.

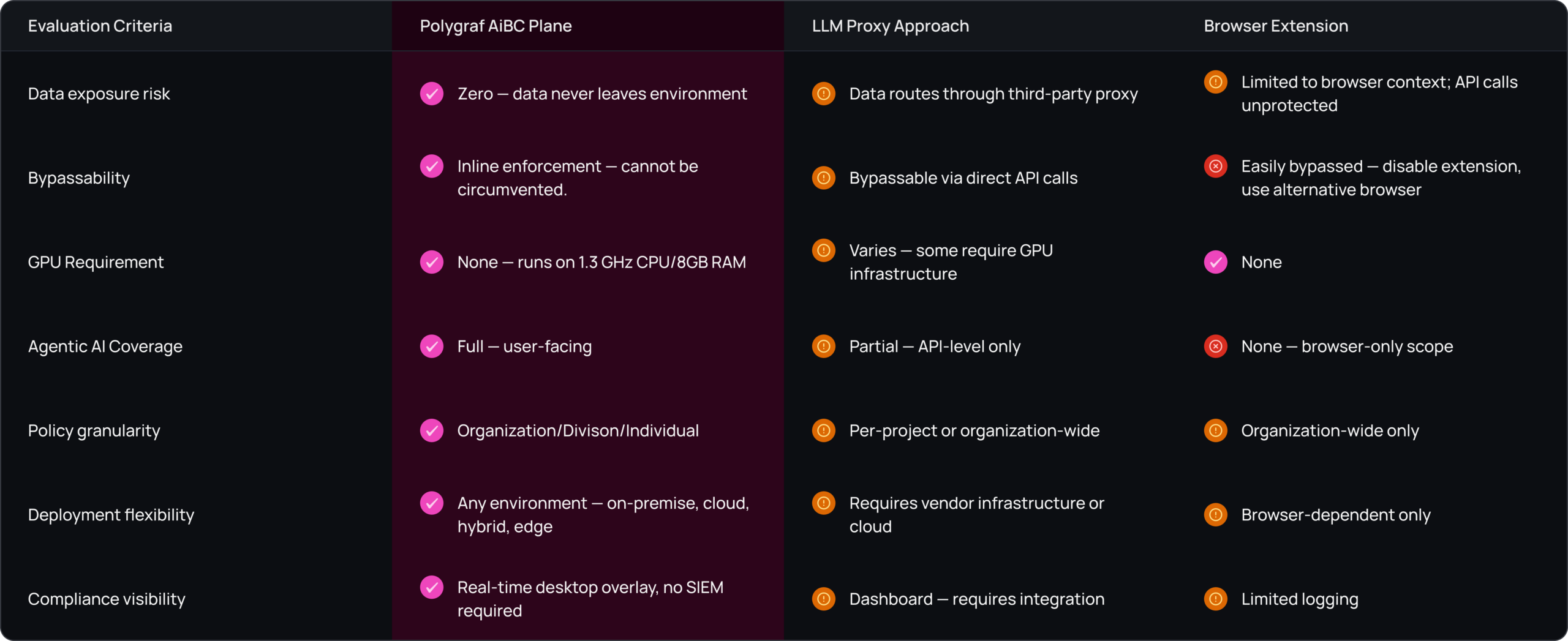

Enterprise AI creates uncontrolled data exposure. Polygraf's AiBC Plane enforces organizational AI policy inline and in real time, with no data leaving your environment.

Security Standards You Can Trust.

Polygraf Deploys as a Container on

- On-Premises (Air-Gapped): Kubernetes, Docker. Container ready. Available via API.
- Private Cloud: VMware, OpenStack. Container ready. Available via API.
- Azure: AKS, Container Apps. Container ready. Available via API.
- Google Cloud: GKE, Cloud Run. Container ready. Available via API.
- AWS: EKS, ECS, Lambda. Container ready. Available via API.
- Edge Devices: NVIDIA, Intel. Container ready. Available via API.

<1 hour

Average deployment time

1.3GHz & 8GB RAM

Compute requirements

Zero

Changes to existing workflows

Numbers that matter

<100 ms

enforcement latency

40-130MB

RAM footprint

1.3GHz/8GB

minimum CPU/RAM

100%

input+output coverage

0

third-party data exposure

Our Customers & Partners.

Try Secure LLM - Your AI Privacy Engine.

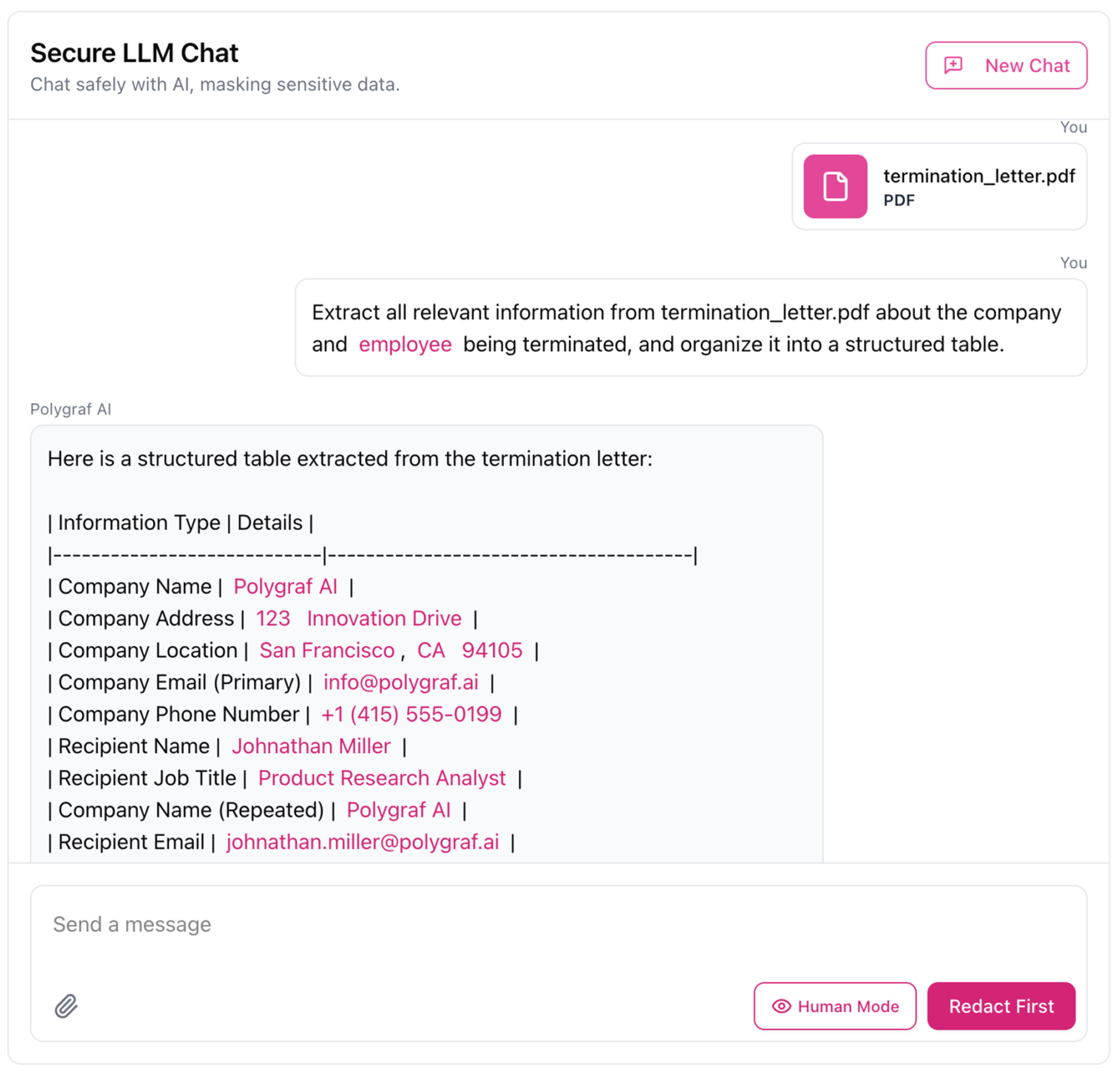

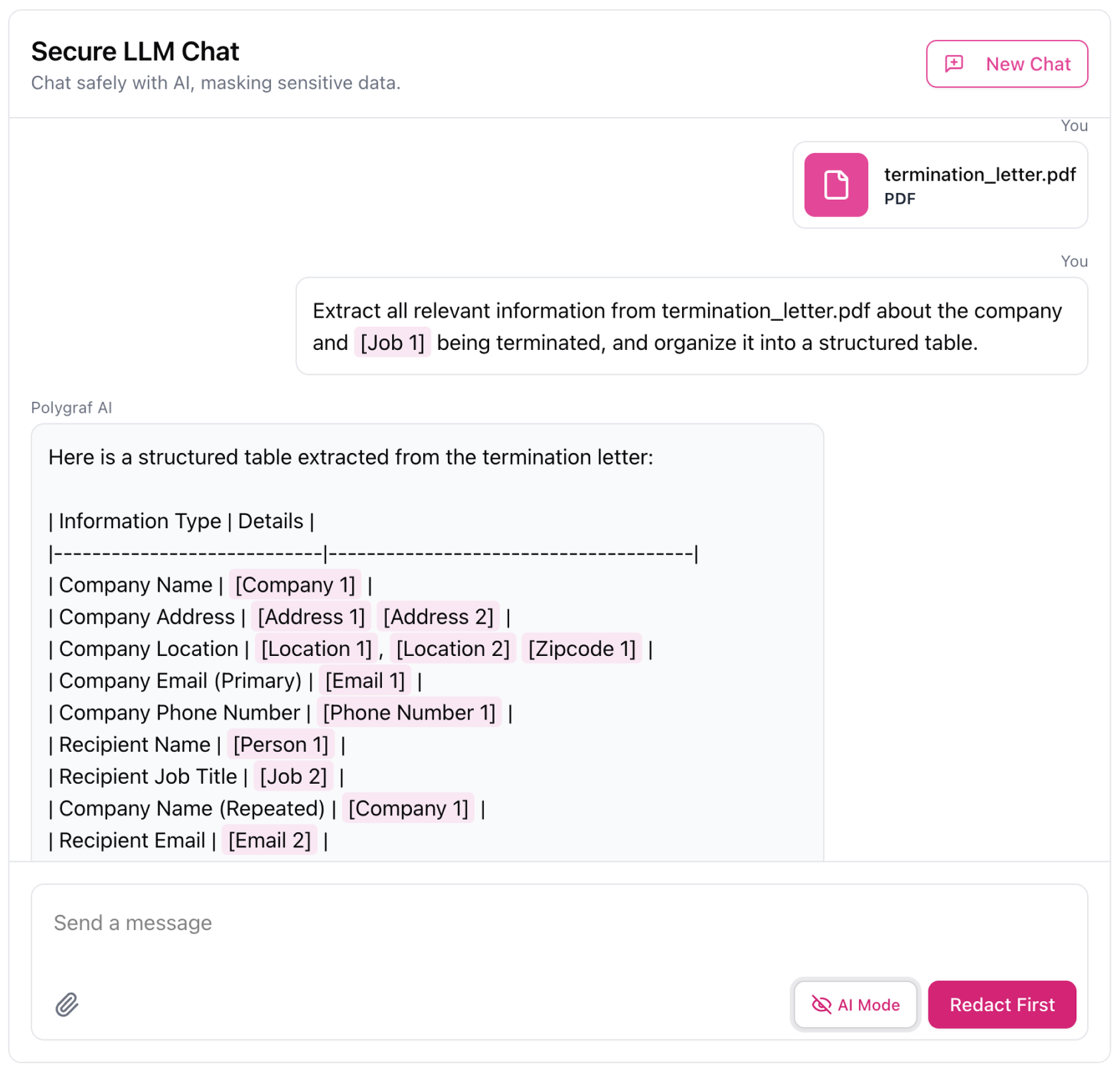

Polygraf’s Secure LLM protects your privacy by automatically removing personal and confidential data before it’s shared with ChatGPT, Claude, or any other external model - then safely restoring it after processing the response.
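To make the remove-then-restore round trip concrete, here is a minimal Python sketch. It is not Polygraf's implementation: the `anonymize` and `deanonymize` names, the `[EMAIL_n]` placeholder format, and the email-only regular expression are all assumptions chosen for illustration. A real system would cover many more data types.

```python
import re

# Hypothetical pattern: matches email addresses only, for illustration.
EMAIL_RE = re.compile(r"[A-Za-z0-9._%+-]+@[A-Za-z0-9.-]+\.[A-Za-z]{2,}")


def anonymize(text):
    """Replace each distinct email with a placeholder.

    Returns the masked text and a placeholder -> original mapping.
    """
    mapping = {}

    def _substitute(match):
        value = match.group(0)
        # Reuse the placeholder if this value was already seen.
        for placeholder, original in mapping.items():
            if original == value:
                return placeholder
        placeholder = f"[EMAIL_{len(mapping) + 1}]"
        mapping[placeholder] = value
        return placeholder

    return EMAIL_RE.sub(_substitute, text), mapping


def deanonymize(text, mapping):
    """Put the original values back into a model response."""
    for placeholder, original in mapping.items():
        text = text.replace(placeholder, original)
    return text


prompt = "Contact jane.doe@example.com or bob@example.org, then jane.doe@example.com again."
masked, mapping = anonymize(prompt)
# Only `masked` would be sent to the external model; `mapping` stays local.
restored = deanonymize("Reply to [EMAIL_2] first.", mapping)
```

Keeping the mapping on the local side is the point of the design: the external model only ever sees placeholders, and the originals are reinserted after the response comes back.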

Input & Output Risk Controls.

Real-time protection for every LLM call.

Input Protection

Secure

Processing

Output Validation

Input Controls

Protect your AI systems from malicious inputs, sensitive data leaks, and policy violations.

Invisible Text Detector

Detects and removes hidden or invisible characters that might be present within the user’s input.

Anonymizer

Detects and removes or masks Personally Identifiable Information (PII) from user input prompts, safeguarding user privacy.

Code Ban

Competitor Ban

Filters out any mentions of competitor names within the user’s input.

Substring Control

Sentiment Analysis

Analyzes the emotional tone or sentiment expressed in the user’s input prompt.

Prompt Injection Detector

Specifically detects and prevents crafty input manipulations that target large language models, known as prompt injection attacks.

Token Limit

Ensures that user input prompts do not exceed a predetermined token count.
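Several of the input controls above can be pictured as small checks chained in front of the model. The Python sketch below is an assumption-laden illustration, not Polygraf's code: the function names are hypothetical, invisible characters are approximated as Unicode format characters (category `Cf`), and the token count is a crude whitespace word count rather than a real tokenizer.

```python
import unicodedata


def strip_invisible(text):
    """Drop Unicode format characters (category Cf), such as zero-width spaces."""
    return "".join(ch for ch in text if unicodedata.category(ch) != "Cf")


def within_token_limit(text, max_tokens):
    """Crude stand-in: counts whitespace-separated words, not model tokens."""
    return len(text.split()) <= max_tokens


def find_banned_terms(text, banned):
    """Return the banned terms (for example competitor names) found in the text."""
    lowered = text.lower()
    return [term for term in banned if term.lower() in lowered]


def check_input(text, max_tokens, banned):
    """Run the checks in order; cleaning first so hidden characters cannot mask a term."""
    cleaned = strip_invisible(text)
    return {
        "cleaned": cleaned,
        "had_invisible": cleaned != text,
        "within_limit": within_token_limit(cleaned, max_tokens),
        "banned_hits": find_banned_terms(cleaned, banned),
    }


result = check_input("Compare us\u200b to Acme\u200d Corp", 10, ["acme corp"])
```

Stripping every `Cf` character is deliberately blunt: it also removes joiners used in some emoji and scripts, so a production filter would likely need an allow-list.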

Output Controls

Ensure AI outputs meet quality, compliance, and safety standards before reaching users.

Topics Control

Filters out LLM-generated responses that touch upon specific prohibited subjects.

Bias Control

De-anonymizer

JSON Validator

No Refusal Enforcement

Toxicity Filter

Detects and flags any offensive, harmful, or abusive language that might be present in the LLM’s generated responses.

Copyright Checker

Reviews your input prompt against copyrighted sources to detect potential infringement before the text is even generated.

URL Reachability / Validator

Checks whether any URLs that are present in the LLM’s generated response are valid and can be successfully accessed.

Proven in Real-world AI Security Deployments.

Learn how industry leaders secure their AI operations with Polygraf.

Polygraf AI Secure Printing & Scanning Solution with Epson

Polygraf AI, an expert in AI-driven data governance, partnered with Seiko Epson Corporation to build a secure printing and scanning solution that automates data redaction, ensures regulatory compliance, and reduces risks at enterprise endpoint devices like printers and scanners.

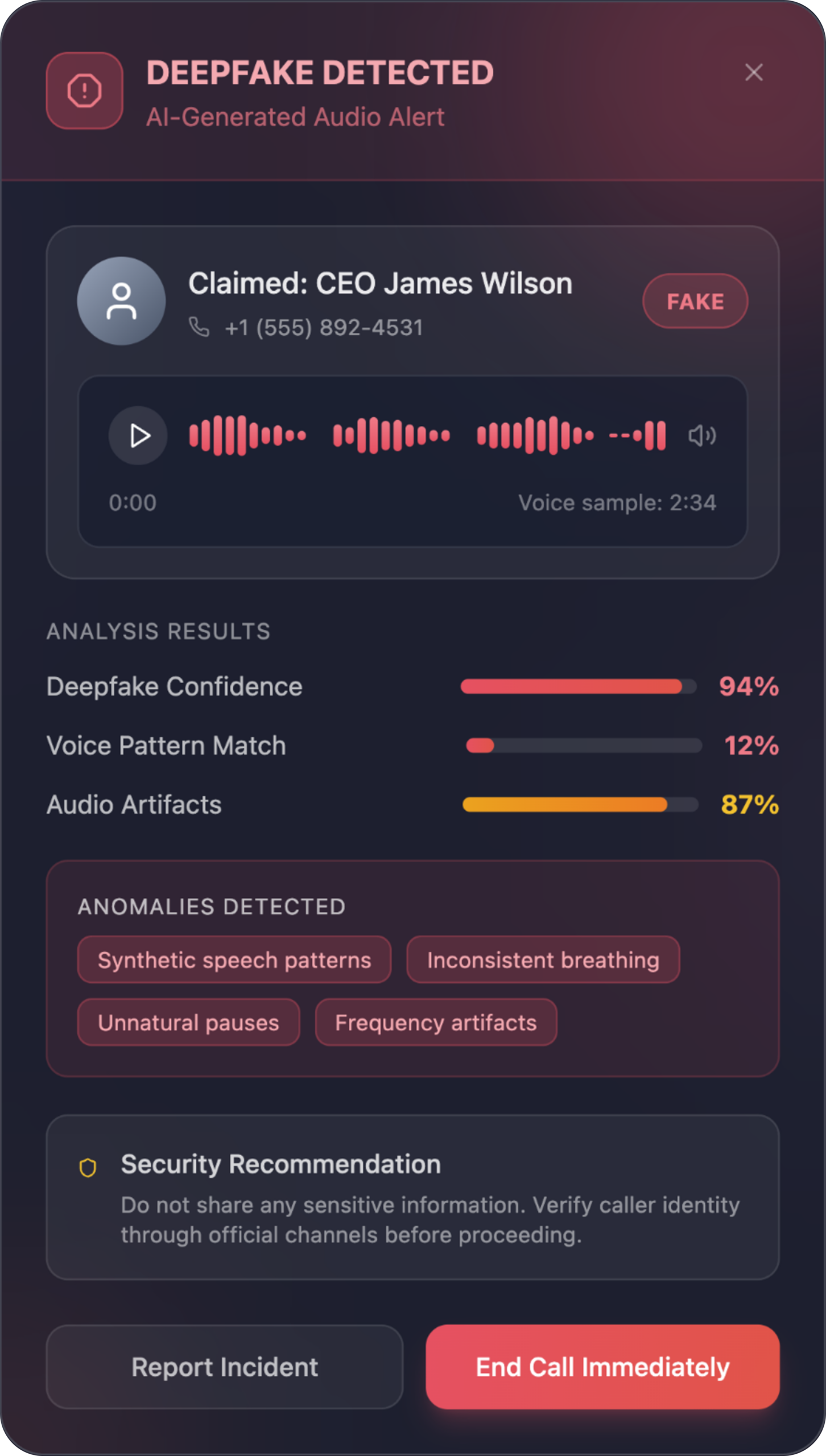

Countering AI and Deepfake Smishing and Vishing Threats

Polygraf’s proprietary multilayer AI engine has been independently validated as the most accurate globally for detecting AI-generated and manipulated content. Beyond accuracy, Polygraf emphasizes that minimizing false positives is equally critical to prevent harmful misclassification and maintain trust.

Polygraf Bluebird AI Humanizer Case Study

Bluebird Sales helps cutting-edge tech companies reach the right engineering audience through high-value outbound campaigns, but as email filters become smarter at detecting and blocking AI-written messages, even highly relevant outreach can end up flagged, filtered, or lost in spam traps.


US County Government: AI Governance Solution

A US county partnered with Polygraf AI to implement a comprehensive data governance solution, delivering AI-driven governance, secure printing and scanning, and content validation tools that strengthen data security, improve regulatory compliance, and reduce risks across enterprise systems and document workflows.

End-to-End AI Data Protection.

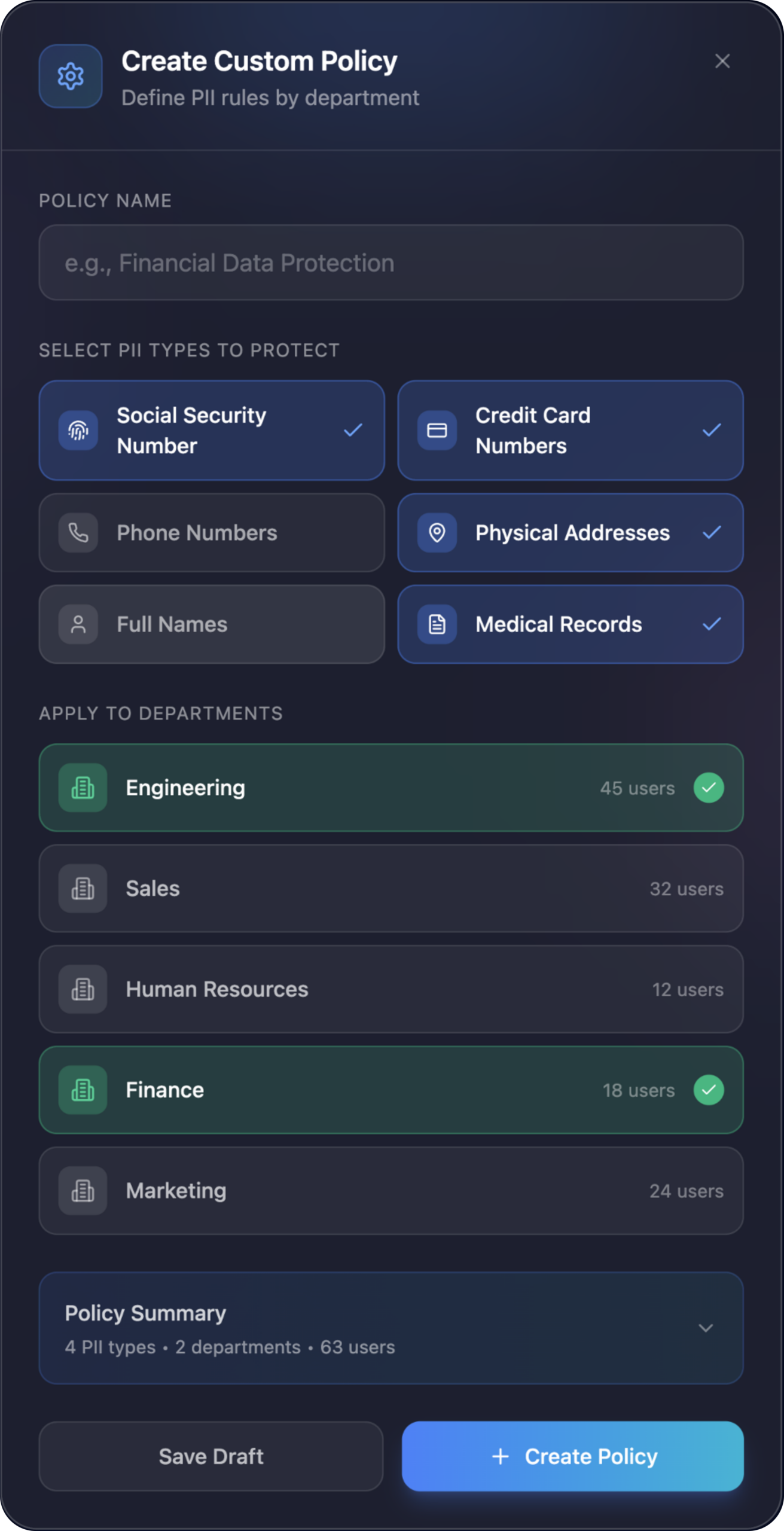

Build Fine-Grained Data Policies for Every Team

Define exactly what each department can and can’t share. Choose the data types to protect, assign rules to teams, and apply custom restrictions across AI tools, email, Slack, and more - all from one place.

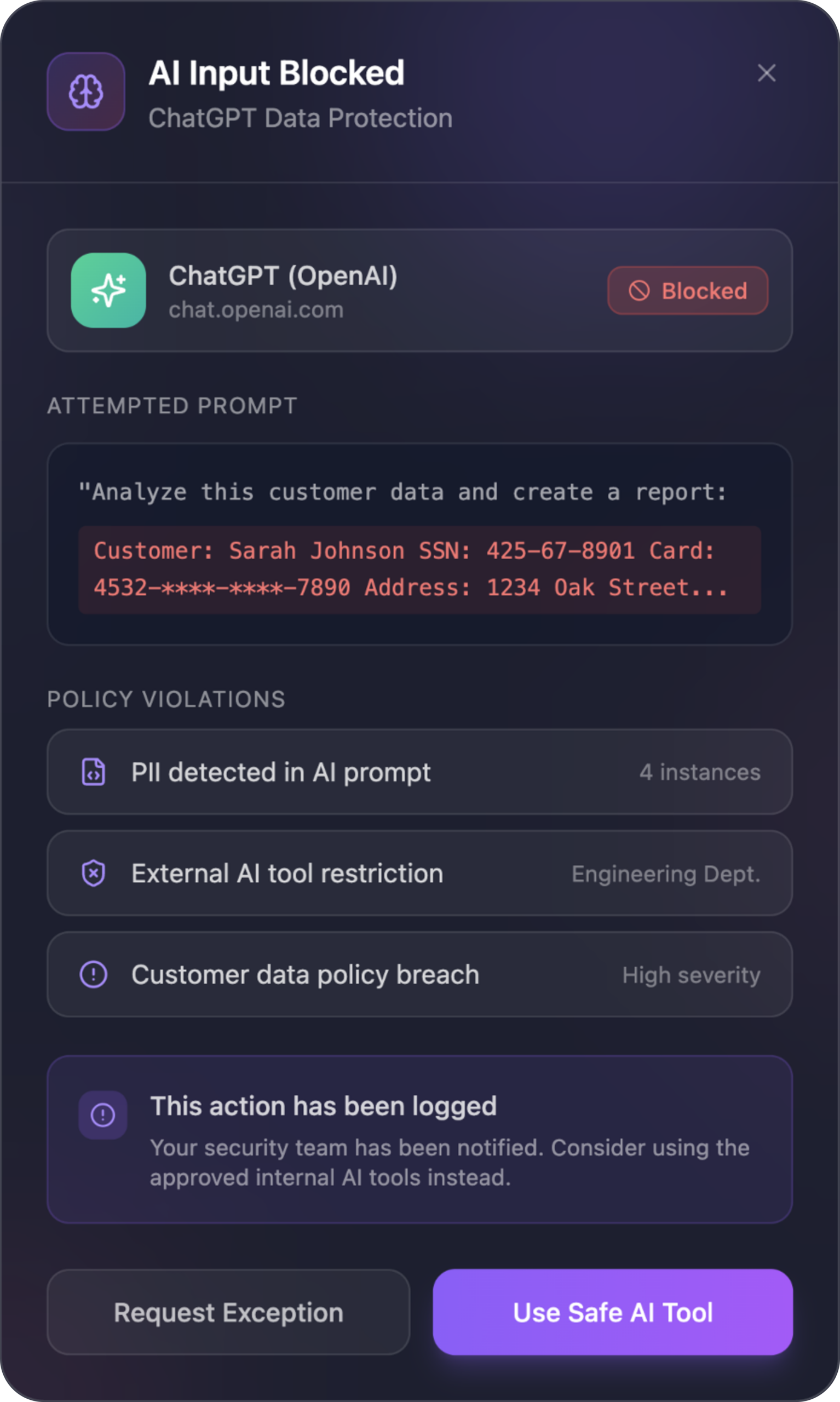

Stop PII & Confidential Data From Reaching any LLM

Polygraf analyzes AI prompts in real time and blocks any prompt that contains PII, customer data, secrets, or regulated content. Users get safe alternatives while security gets full visibility into violations.

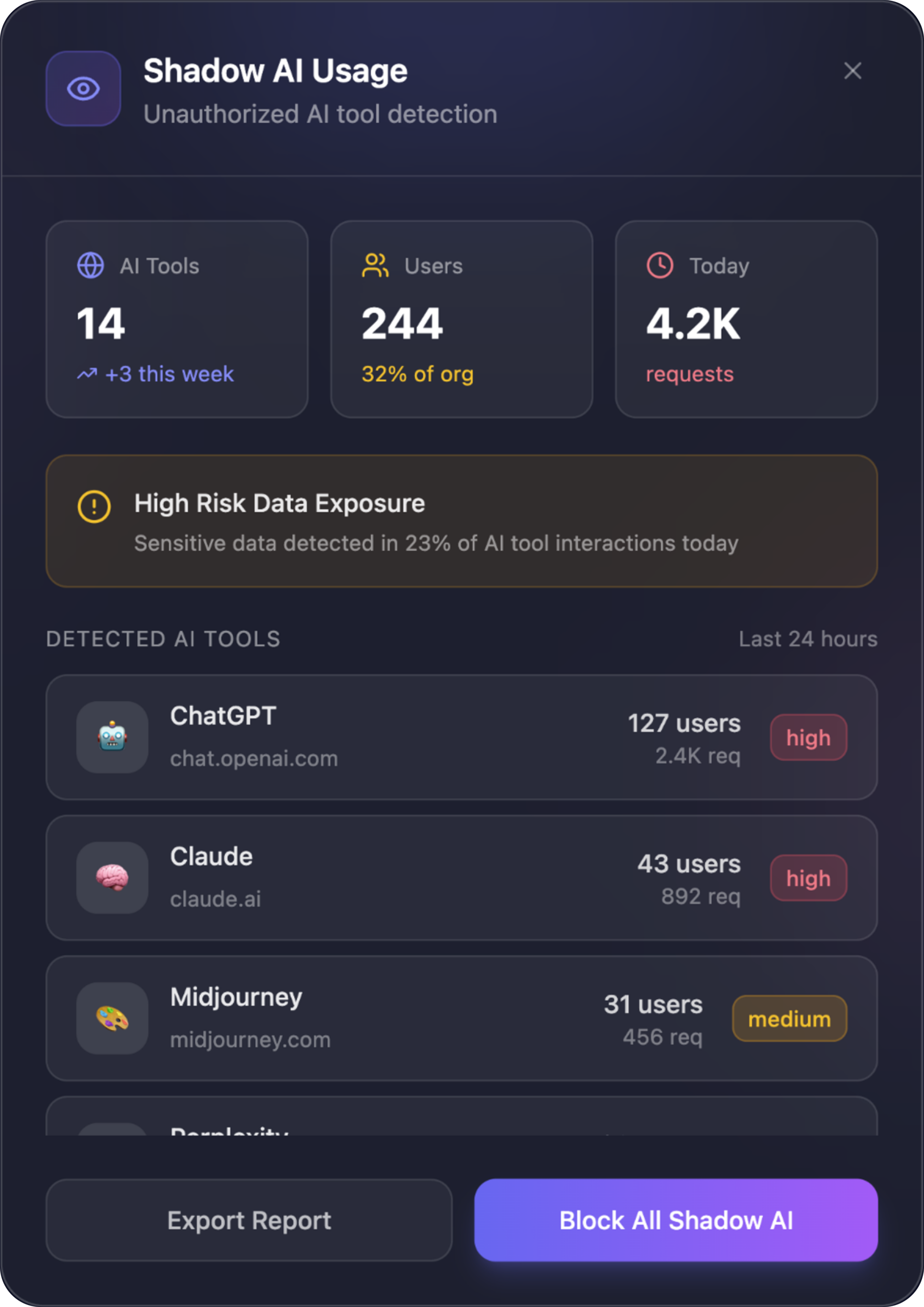

Discover Unauthorized AI Usage Across Your Company

Polygraf reveals every AI tool employees use - approved or not. Track volumes, identify high-risk interactions, and automatically block untrusted AI tools to eliminate Shadow AI across the organization.

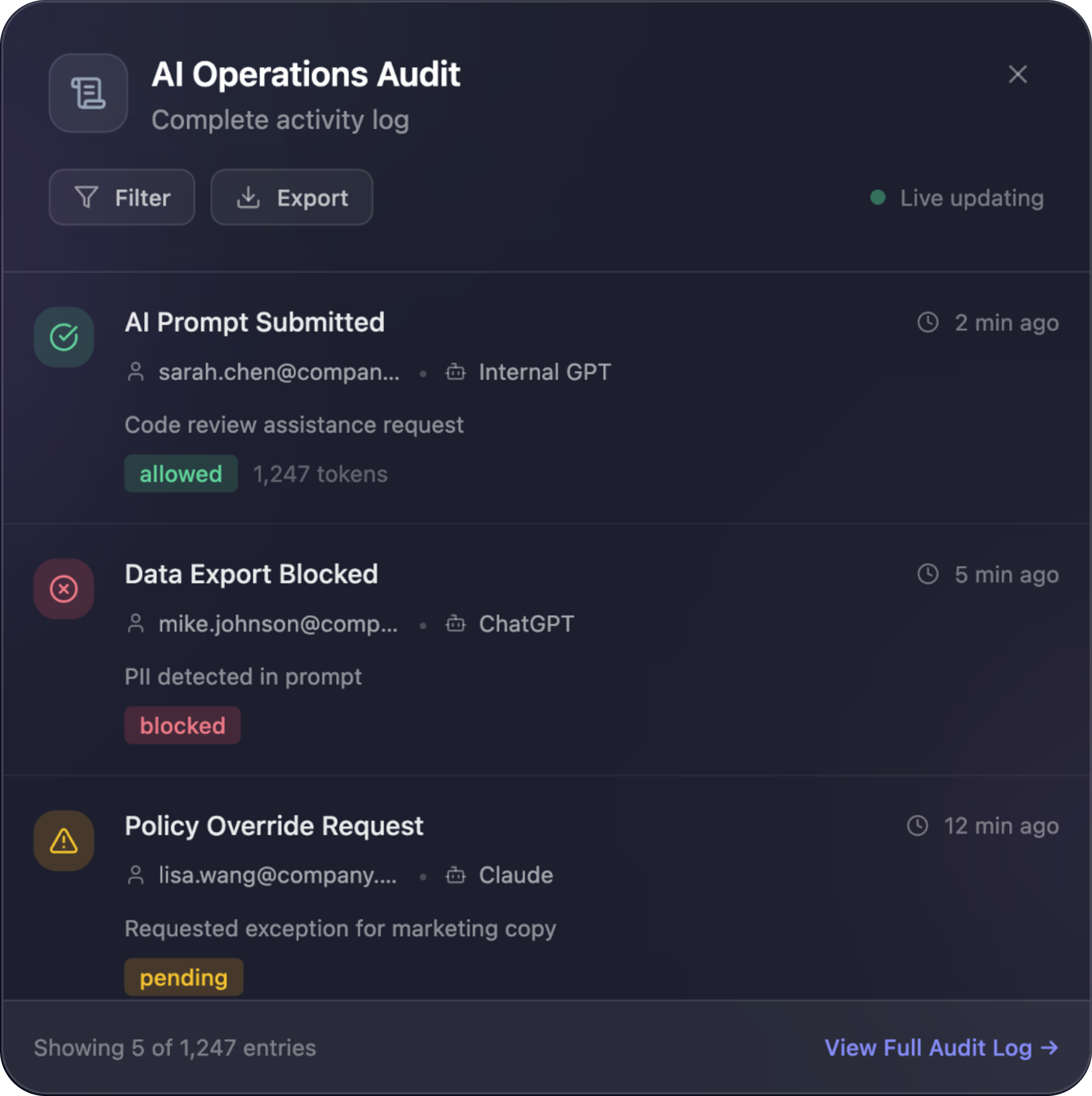

Full Audit Log of Every AI Interaction

Every prompt, every response, every block, every override - all logged with timestamps, users, policies triggered, and risk levels. Exportable, compliant, and built for SOC 2, GDPR, HIPAA, and internal audits.
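One way to picture an exportable log entry with the fields listed above is a small record type serialized as JSON Lines. This is a sketch only: the `AuditEvent` class, its field names, and the JSON Lines export format are assumptions for illustration, not Polygraf's schema.

```python
import json
from dataclasses import asdict, dataclass, field
from datetime import datetime, timezone


@dataclass
class AuditEvent:
    """Hypothetical audit record for a single AI interaction."""
    user: str
    action: str  # for example "prompt", "response", "block", "override"
    policies_triggered: list
    risk_level: str
    # UTC timestamp in ISO 8601 form, filled in when the event is created.
    timestamp: str = field(
        default_factory=lambda: datetime.now(timezone.utc).isoformat()
    )


def to_jsonl(events):
    """Serialize events as JSON Lines, one event per line, for export."""
    return "\n".join(json.dumps(asdict(event), sort_keys=True) for event in events)


event = AuditEvent(
    user="alice",
    action="block",
    policies_triggered=["pii"],
    risk_level="high",
    timestamp="2026-01-01T00:00:00+00:00",
)
exported = to_jsonl([event])
```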

Instant Detection of Deepfake Audio

Polygraf identifies synthetic voices in real time - spotting voice pattern mismatches, unnatural pauses, and frequency artifacts. Stop impersonation scams and verify identities before damage is done.

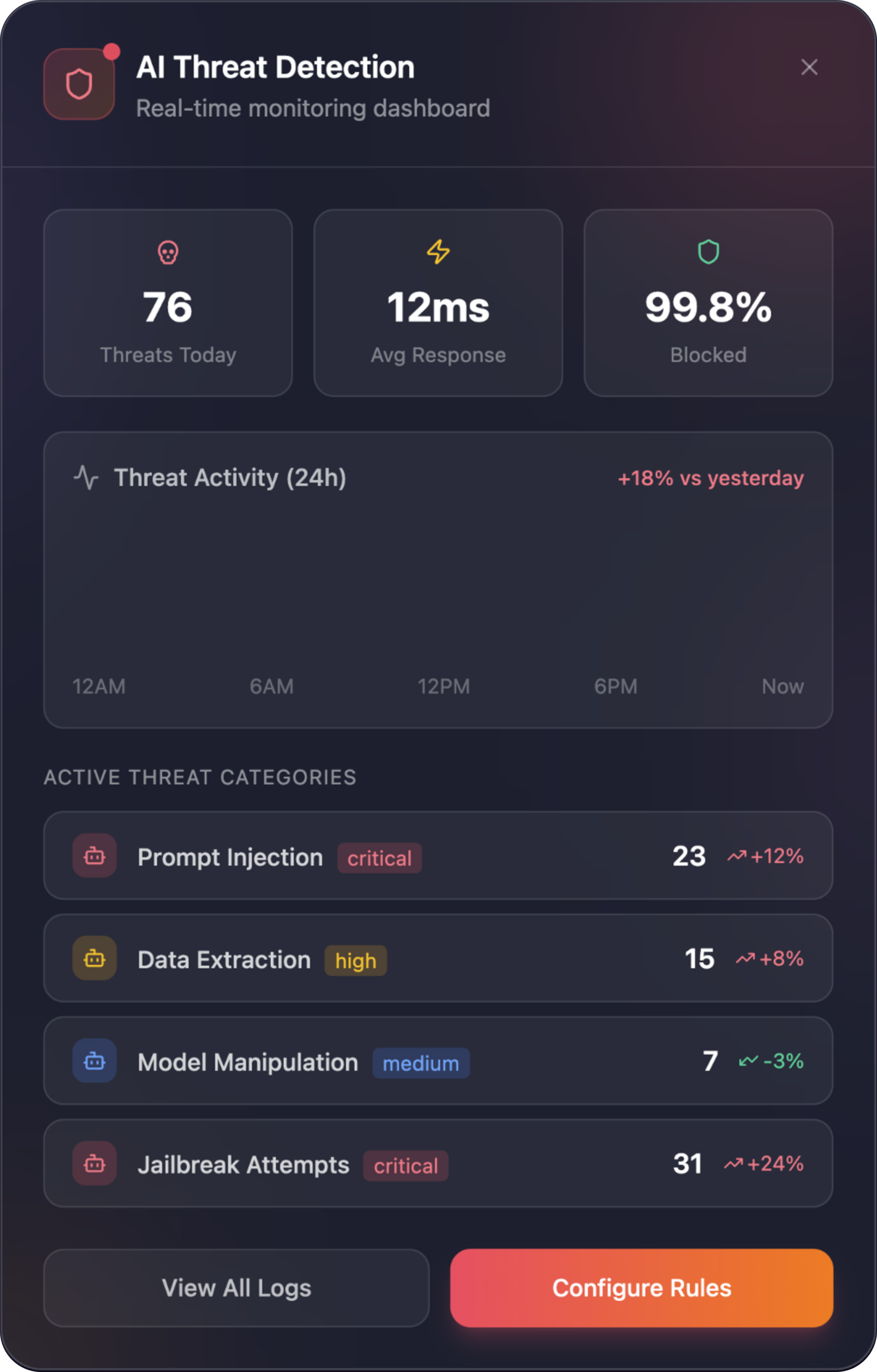

Complete Real-Time AI Threat Monitoring

A live dashboard that surfaces prompt injection attempts, data extraction risks, jailbreak attempts, and model manipulation. See threats in real time, measure response times, and instantly adjust policies.

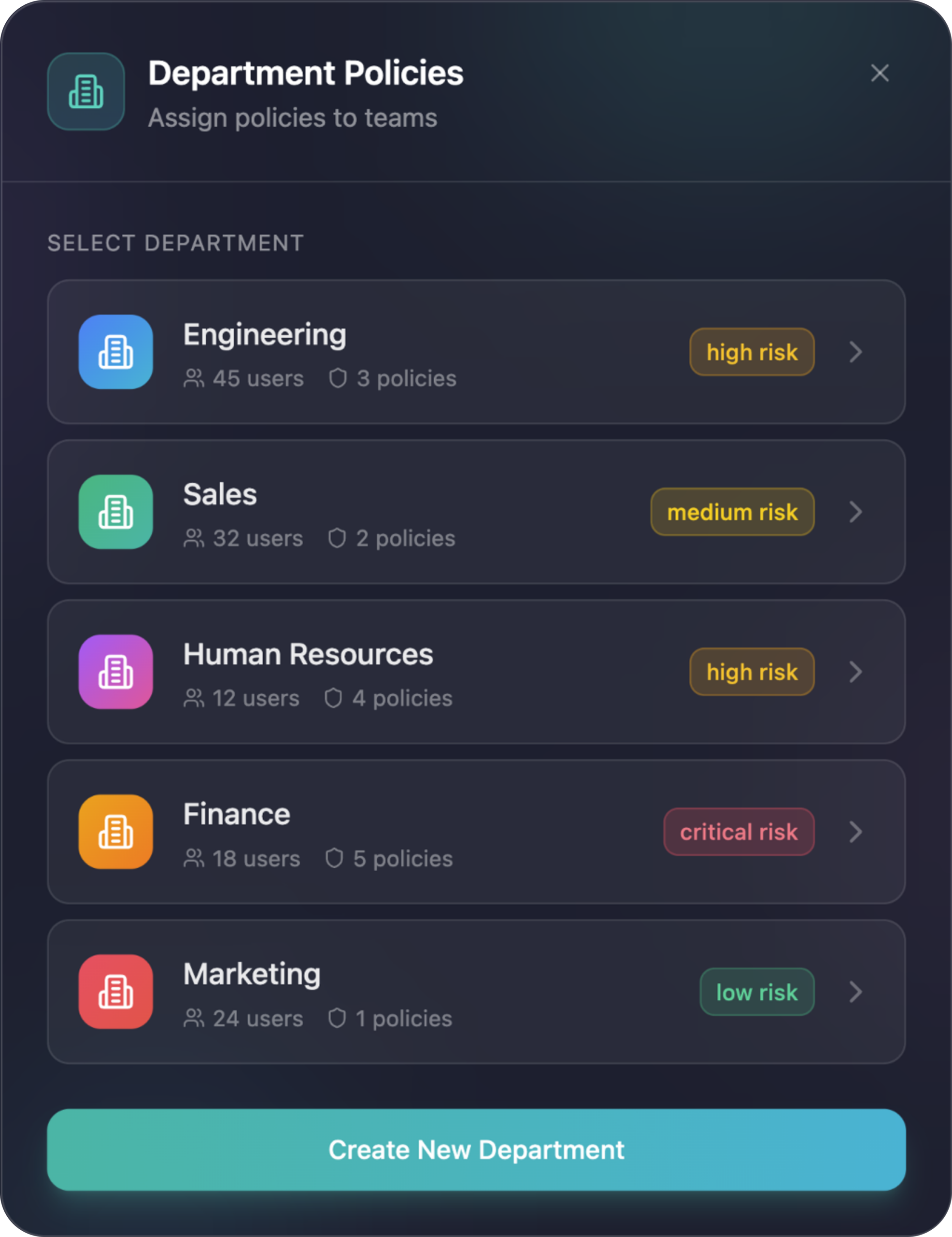

Assign Tailored AI & Data Policies by Department

Apply different rule sets to Engineering, Sales, HR, Finance, and Marketing with a single click. Reduce risk by giving each team the exact level of AI access they need - nothing more.
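Per-department rule sets can be thought of as a lookup from team to policy, with a restrictive default for teams that have no explicit entry. The sketch below is hypothetical throughout: the `Policy` fields, the department names used as keys, the tool labels, and the data-type labels are invented for illustration and do not reflect Polygraf's configuration format.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Policy:
    """Hypothetical rule set: data types to block and AI tools to allow."""
    blocked_data_types: frozenset
    allowed_tools: frozenset


# Restrictive fallback for departments without an explicit policy.
DEFAULT_POLICY = Policy(
    blocked_data_types=frozenset({"pii", "credentials", "financials"}),
    allowed_tools=frozenset(),
)

POLICIES = {
    "engineering": Policy(
        blocked_data_types=frozenset({"pii", "credentials"}),
        allowed_tools=frozenset({"code-assistant"}),
    ),
    "hr": Policy(
        blocked_data_types=frozenset({"pii", "credentials", "financials"}),
        allowed_tools=frozenset({"chat"}),
    ),
}


def is_allowed(department, tool, data_types):
    """Allow only if the tool is approved and no blocked data type is present."""
    policy = POLICIES.get(department.lower(), DEFAULT_POLICY)
    has_blocked_data = bool(set(data_types) & policy.blocked_data_types)
    return tool in policy.allowed_tools and not has_blocked_data
```

For example, `is_allowed("Engineering", "code-assistant", ["source_code"])` passes under these made-up rules, while any request from a department missing from `POLICIES` is denied by the default.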

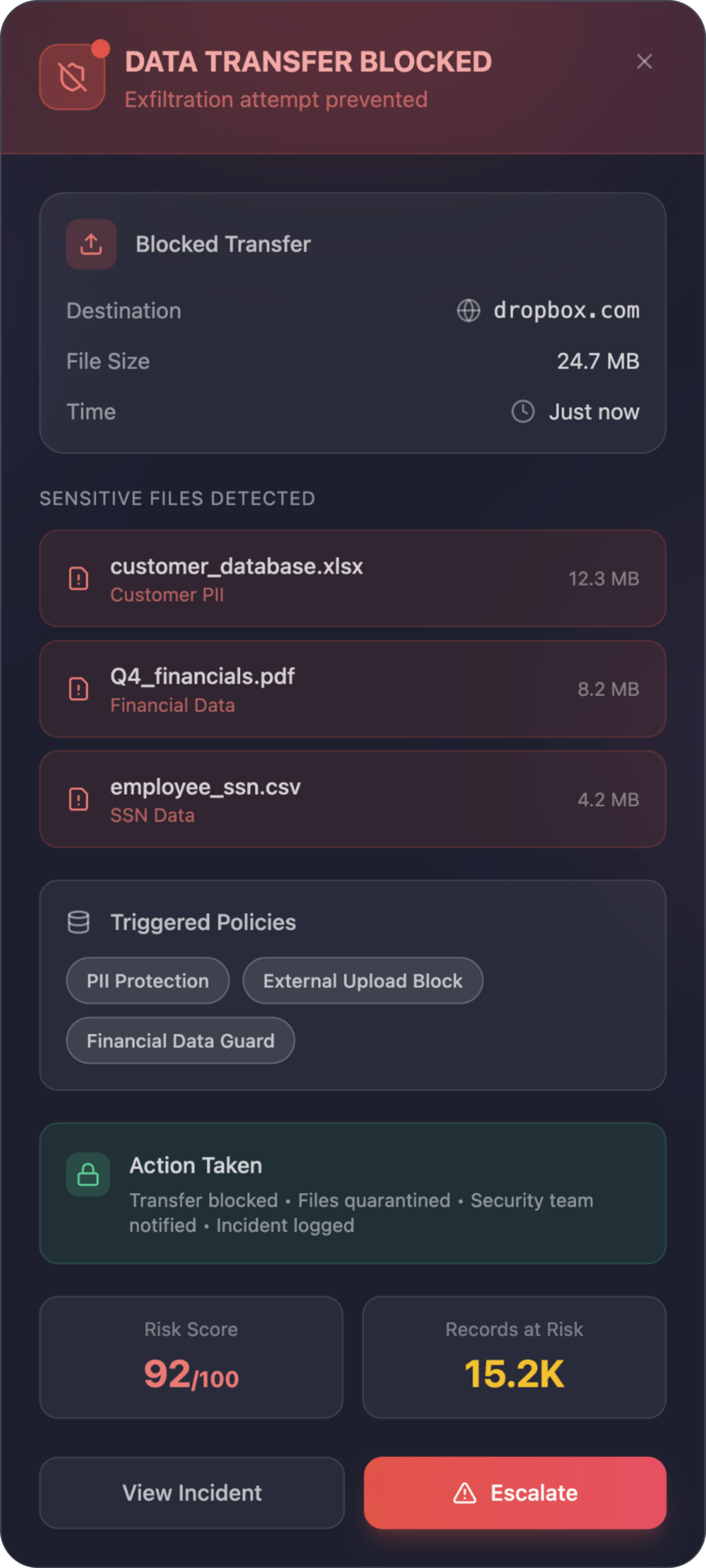

Block Unauthorized File Transfers Instantly

Polygraf detects sensitive data inside files and stops risky uploads to external apps (Dropbox, Google Drive, personal email, etc.). Files are quarantined, security is notified, and every action is logged.

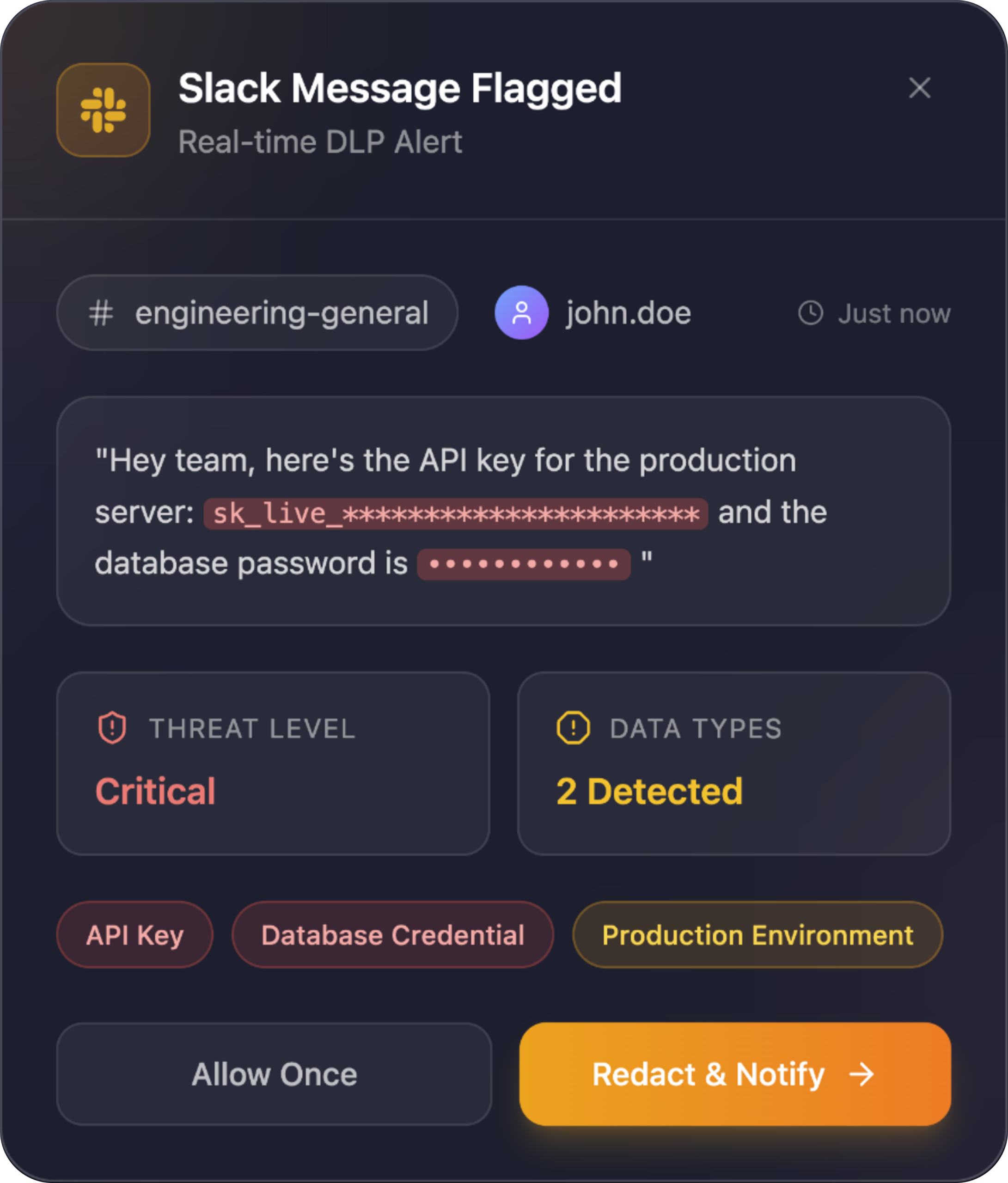

Sensitive Data Protection Inside Slack

Prevent accidental leaks in internal chats. Polygraf flags sensitive data in Slack messages instantly, classifies the severity, and lets users redact or notify the right team - without slowing communication.

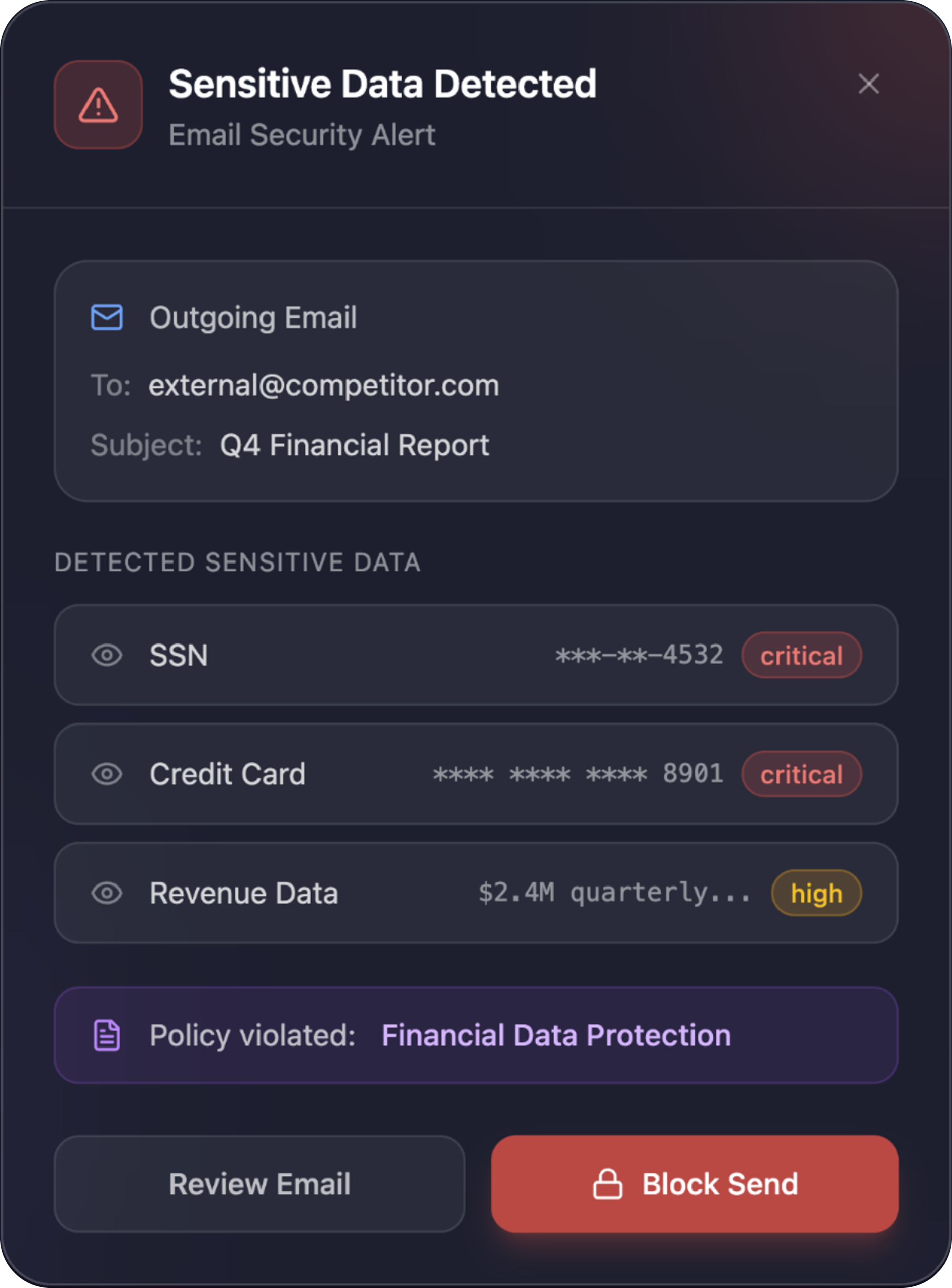

Real-Time Email Data Leak Prevention

Polygraf automatically scans every outgoing email for sensitive data - PII, financials, credentials, or regulated information - and blocks confidential data before it leaves your organization. Review, redact, or override with full policy context.

Security Standards You Can Trust.

ISO/IEC 27001:2022 Certified

SOC 2 Type II Certified

SOC 2 Type I Certified

IL2-IL6 Ready

NIST RMF Ready

HIPAA Compliant

FERPA Compliant

EU AI Act Compliant

PCI-DSS Compliant

CPRA Compliant

GDPR Compliant

Award-Winning AI Security Innovation.

USPTO

Patent: System and Method for Identifying and Determining a Content Source

2026

Cyber Defense Magazine Global InfoSec Awards

Most Innovative AI Usage Control

2026

2026 Cybersecurity Excellence Awards

AI Data Security & Governance

2026

22nd Annual Globee® Awards

22nd Annual Globee® Awards for Cybersecurity

2026

Road to Battlefield

Winner

2025

SXSW

Winner - Best in Show

2025

Products That Count

Top AI & Data Category Product

2025

Summerfest Tech

Winner - Best Fin/Insurtech 2025

2025

SXSW PITCH

Winner - Enterprise, Smart Data, FinTech & Future of Work

2025

SXSW

Top 10 Startup Of The Year

2024

dealroom.co

Top Analytics Startup to Watch Globally

2024

NVIDIA

NVIDIA Inception Program Member

2024

Products That Count

Top AI & Data Governance Product

2024

C2PA

Contributing Member

2024

Products That Count

Top AI Content Detection Tool

2024

Intel

Intel Alliance Program Member

2024

Austin Chamber

Top AI Startup

2023

Ready to Secure Your AI? Let’s Talk.


© 2026 Polygraf AI. All rights reserved.