Think about the last time someone on your team said, “I’ve been using this AI tool, it saves me hours.” You probably nodded. Maybe even encouraged it.

Now ask yourself: Does your security team know about it? Is there an audit trail? Does it have access to internal systems, customer data, or confidential conversations?

If you’re not sure, you’re not alone. And that uncertainty is exactly the problem.

The Quiet Rollout Nobody Authorized

AI adoption inside enterprises didn’t wait for a governance memo. It happened organically, tool by tool, team by team – a sales rep using an AI notetaker, a developer using an AI code assistant, an HR manager summarizing performance reviews with a chatbot.

Each individually harmless. Collectively, a security blind spot the size of your entire organization.

According to Microsoft’s Cyber Pulse research, more than 80% of Fortune 500 companies are already running active AI agents – built with low-code and no-code tools, deployed across sales, finance, HR, and security operations. [1]

Most of them were not deployed by IT. Most of them have no centralized oversight. And most of them are operating right now, invisibly, inside your infrastructure.

This is shadow AI. And unlike shadow IT of the 2010s – where someone would sign up for Dropbox without telling anyone – shadow AI doesn’t just store your data. It reads it, summarizes it, acts on it, and sometimes sends it somewhere else.

Why This Is Different From Every Security Problem Before It

Traditional security was designed around a fundamental assumption: the entity you need to authenticate, authorize, and monitor is a person.

Humans log in. Humans get credentials. Humans have roles, managers, and eventually, departure dates. The entire framework of identity and access management – MFA, role-based access controls, zero-trust architecture – was built for human identities at its core.

AI agents are not humans. They’re autonomous software entities that authenticate with credentials, access systems, query databases, take notes in meetings, draft proposals, and trigger real actions, often without a human in the loop.

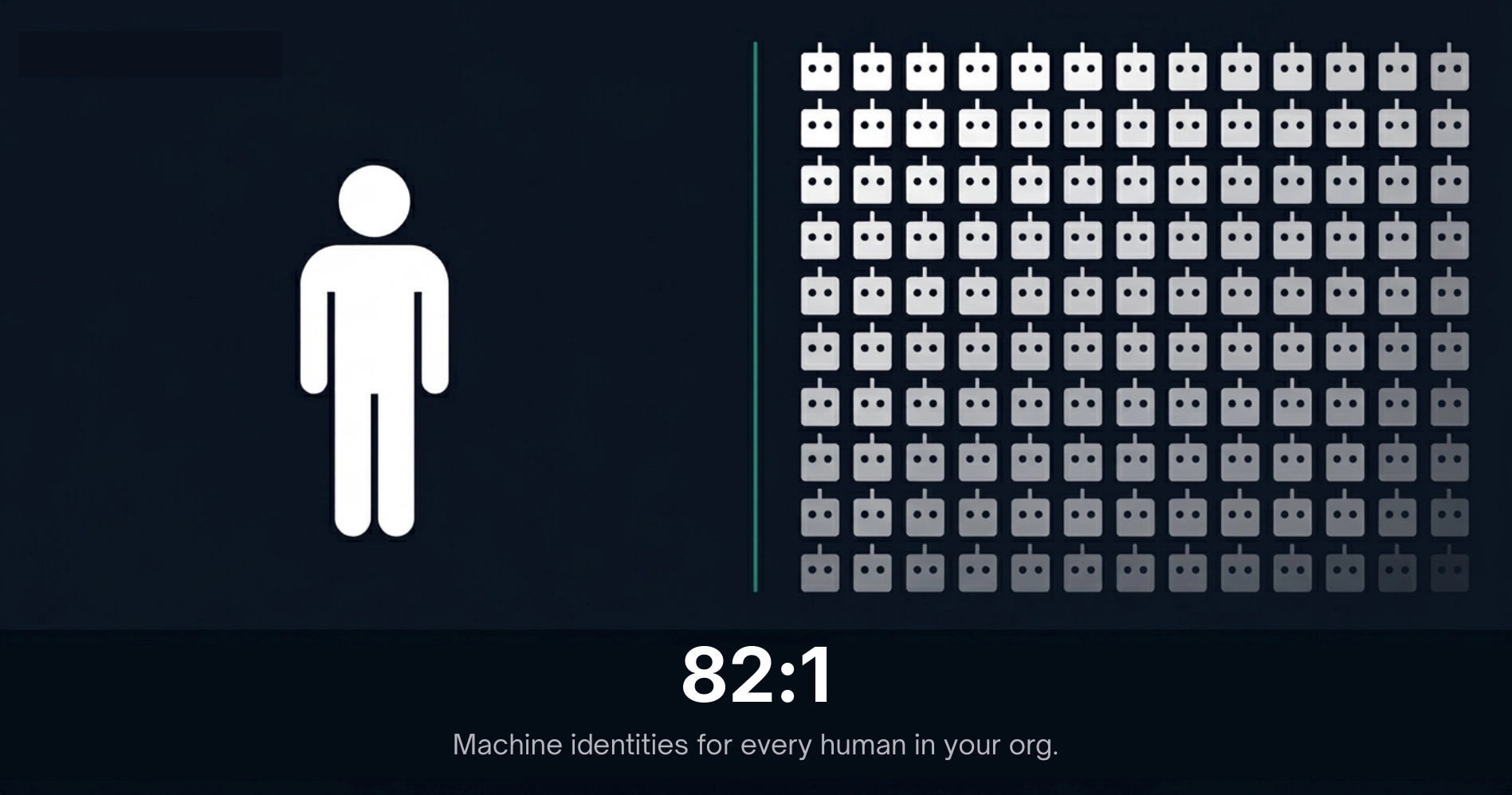

In the average enterprise, machine identities now outnumber human identities 82 to 1. In parts of the defense and finance sectors, that ratio exceeds 500:1. [2][3]

For every employee at your company, there are potentially dozens – sometimes hundreds – of non-human identities moving through your systems. AI agents. Automation scripts. API connections. Service accounts. And increasingly: AI models that were never formally approved, never security-reviewed, and never inventoried.

“You can’t protect what you can’t see.” That isn’t just a slogan. It’s the operational reality most enterprises are living in right now.

What an AI Agent Actually Does in a Day

Let’s make this concrete. Imagine a mid-sized defense contractor with 3,000 employees. Across those teams, here’s what might be running on any given day:

- An AI meeting assistant joins a call about a sensitive contract bid, transcribes everything (including the pricing strategy and partner names), and uploads the transcript to a cloud server in another country.

- A developer’s AI code assistant has read access to the internal repo and is sending code snippets to an external API to generate suggestions.

- A finance analyst is summarizing earnings projections in a commercial chatbot – not realizing that some of those inputs may be used to train the model.

- An HR tool is running performance review text through an AI that stores data indefinitely, with no clear data retention policy.

None of these people are bad actors. They’re trying to do their jobs well. But each of these scenarios represents real exposure: leaked competitive information, regulatory violations, data exfiltration, and compliance failures.

And in regulated industries – defense contractors, healthcare providers, financial institutions, government agencies – these aren’t hypothetical risks. They’re potential CMMC violations, HIPAA breaches, ITAR infractions. The kind that cost contracts, trigger audits, and end careers.

The Three Gaps Nobody Talks About

When we talk to CISOs and security leaders, the same three gaps come up again and again:

- The visibility gap

Security teams don’t know which AI tools are running. There’s no inventory. No registry. No way to know what’s connecting to what. You can’t enforce policy on systems you don’t know exist. Only 34% of enterprises report having AI-specific security controls in place, which means roughly two-thirds are governing AI with controls designed for conventional software. [4]

- The behavior gap

Even when a tool is known and approved, there’s no mechanism to monitor what it actually does in context. An AI meeting assistant approved for general use doesn’t stop transcribing when the conversation turns to classified projects. The model doesn’t know what it doesn’t know.

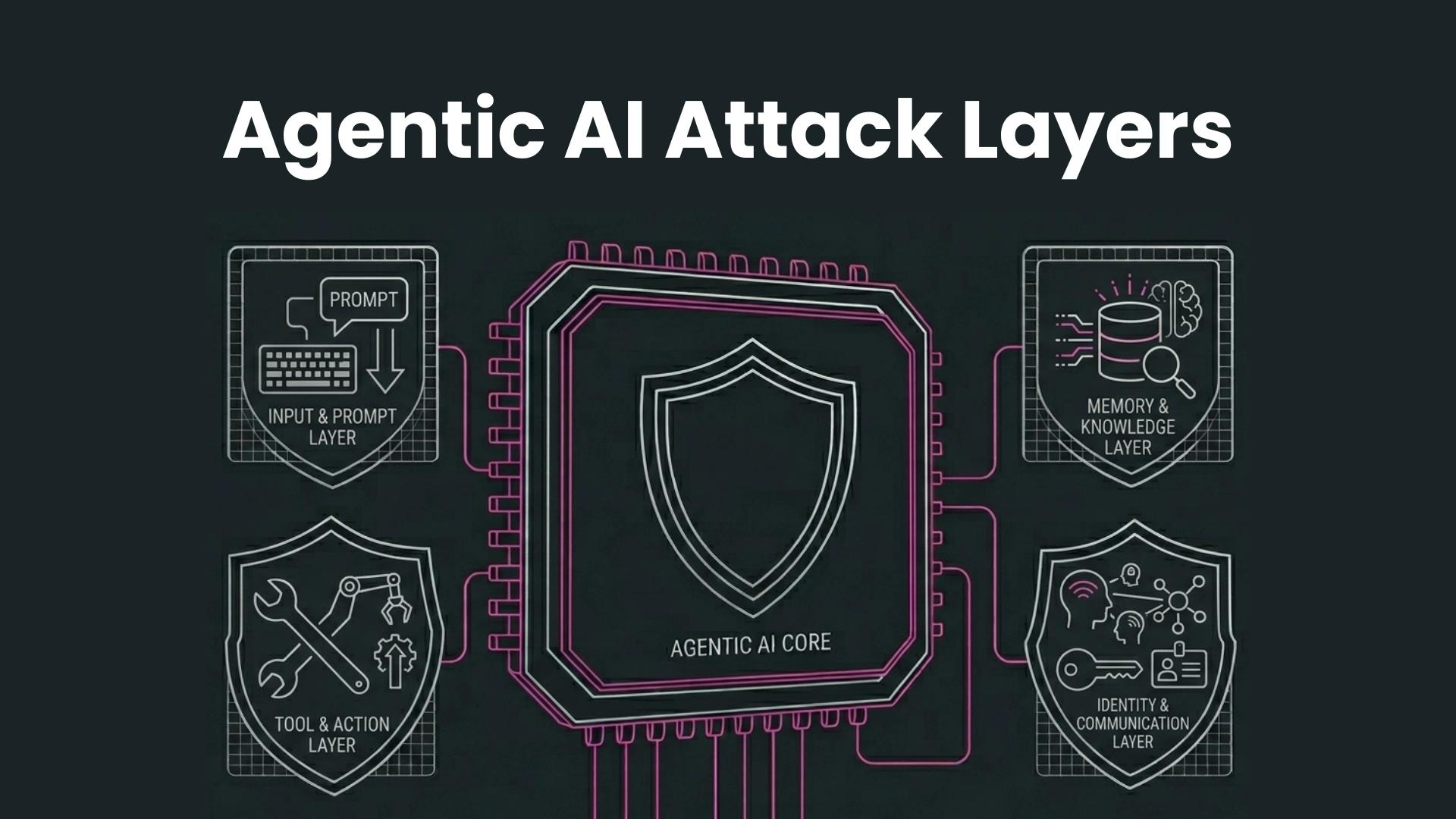

- The perimeter gap

Traditional security was built on controlling the network. But AI agents operate across cloud APIs, external services, and third-party platforms – entirely outside the network perimeter. Firewalls and DLP tools were not designed for a world where the threat vector is a language model processing your internal documents.
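The visibility gap starts as an inventory problem. As a minimal sketch, assuming you can already export outbound connection logs (for example, from a web proxy), the Python below compares observed destinations against a list of known AI services and an approved registry, and reports who is using what without approval. The hostnames, the `EgressEvent` record, and `find_shadow_ai` are hypothetical names for illustration, not part of any product or standard.

```python
from collections import defaultdict
from dataclasses import dataclass

# Hypothetical hostnames for AI services; a real list would be curated and kept current.
# Matching here is exact, so subdomains and new services are missed unless added.
KNOWN_AI_HOSTS = {
    "chat.example-ai.com",
    "notetaker.example-ai.com",
    "codeassist.example-ai.dev",
}

# Hypothetical registry of AI services the security team has reviewed and approved.
APPROVED_AI_HOSTS = {"chat.example-ai.com"}


@dataclass(frozen=True)
class EgressEvent:
    """One outbound connection taken from a proxy or firewall log (assumed format)."""
    user: str
    host: str


def find_shadow_ai(events):
    """Map each unapproved AI host to the sorted list of users who contacted it."""
    usage = defaultdict(set)
    for event in events:
        host = event.host.lower()
        if host in KNOWN_AI_HOSTS and host not in APPROVED_AI_HOSTS:
            usage[host].add(event.user)
    return {host: sorted(users) for host, users in sorted(usage.items())}


if __name__ == "__main__":
    events = [
        EgressEvent("alice", "chat.example-ai.com"),  # approved, so not reported
        EgressEvent("bob", "notetaker.example-ai.com"),
        EgressEvent("carol", "notetaker.example-ai.com"),
        EgressEvent("dave", "codeassist.example-ai.dev"),
        EgressEvent("erin", "intranet.corp.local"),  # not an AI service
    ]
    print(find_shadow_ai(events))
    # {'codeassist.example-ai.dev': ['dave'], 'notetaker.example-ai.com': ['bob', 'carol']}
```

A list like this only covers tools whose traffic you can see and recognize; it says nothing about what an approved tool does with the data, which is the behavior gap.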

This Isn’t Solvable With More Policy; It Needs Infrastructure

The instinct when facing a governance problem is to write better policies. Acceptable use policies. AI usage guidelines. Training sessions. And yes – those matter. But policy doesn’t enforce itself.

If an employee pastes a confidential memo into ChatGPT, the policy doesn’t stop it. The training doesn’t stop it. Nothing stops it, because there is no technical control at the model layer.

What regulated enterprises actually need – especially those handling classified information, sensitive defense contracts, or protected health data – is a behavioral control layer that operates at the point where AI meets sensitive data. Not a warning. Not a guideline. A technical enforcement mechanism that understands context and acts on it.

The perimeter has moved. It’s no longer around your network. It’s around the behavior of every AI system that touches your data.
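To make “enforcement at the point where AI meets sensitive data” concrete, here is a deliberately simple sketch of a gate that inspects a prompt before it is sent to an external AI service: it blocks text carrying certain markings and redacts a couple of identifier patterns. The patterns, `gate_outbound_prompt`, and `GateDecision` are hypothetical. It uses plain regular expressions, which are exactly the kind of context-blind rules this article argues are not enough; a context-aware control would swap the pattern checks for a classifier, but the placement of the check, before data leaves, stays the same.

```python
import re
from dataclasses import dataclass

# Hypothetical patterns, for illustration only; real detection needs far more than regexes.
REDACT_PATTERNS = {
    "EMAIL": re.compile(r"\b[\w.+-]+@[\w-]+\.[\w.-]+\b"),
    "SSN": re.compile(r"\b\d{3}-\d{2}-\d{4}\b"),
}
# Hypothetical markings that block the request outright instead of being redacted.
BLOCK_MARKINGS = re.compile(r"\b(CUI|CONFIDENTIAL|ITAR)\b")


@dataclass
class GateDecision:
    allowed: bool
    text: str    # what may be sent; empty when blocked
    reason: str


def gate_outbound_prompt(text: str) -> GateDecision:
    """Decide what, if anything, may leave the perimeter for an external AI service."""
    marking = BLOCK_MARKINGS.search(text)
    if marking:
        return GateDecision(False, "", f"blocked: marking {marking.group(1)}")
    redacted = text
    for label, pattern in REDACT_PATTERNS.items():
        redacted = pattern.sub(f"[{label}]", redacted)
    return GateDecision(True, redacted, "redacted" if redacted != text else "clean")


if __name__ == "__main__":
    print(gate_outbound_prompt("Summarize: contact jane.doe@corp.example, SSN 123-45-6789."))
    print(gate_outbound_prompt("CUI: program schedule for Q3"))
```

The first call is allowed with the email address and number replaced by `[EMAIL]` and `[SSN]`; the second is refused before anything is sent.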

About Polygraf AI

Polygraf AI was built specifically for environments where the stakes are too high for general-purpose AI: government agencies and regulated enterprises. Our architecture runs on specialized Small Language Models designed for compliance-heavy, air-gapped environments. Think of it as a security plane for AI behavior: one that understands the difference between a routine internal update and a conversation containing controlled unclassified information, one that enforces policy at the point of contact rather than after the fact, and one that operates entirely within your perimeter, so no data is transmitted externally until anything that shouldn’t leave has been stripped out.

Find Out Where You’re Exposed

We built a free AI Risk Calculator specifically for this. Enter how your organization currently uses AI (which tools, which teams, what data) and it will estimate your risk exposure across compliance, data leakage, and behavioral control gaps.

Try it now at: https://ai-risk-calculator.polygraf.ai

No sales calls required. Takes under 2 minutes.

Sources

[1] Microsoft Cyber Pulse Research – microsoft.com/en-us/security/security-insider/emerging-trends/cyber-pulse-ai-security-report

[2] CyberArk 2025 State of Machine Identity Security Report – www.bankinfosecurity.com/cyberark-rise-in-machine-identities-poses-new-risks-a-28967

[3] Entro Security – entro.security/blog/takeaways-nhi-secrets-risk-report/

[4] Cisco State of AI Security 2025 – cisco.com/c/en/us/products/security/state-of-ai-security.html

[5] FY2026 NDAA Section 1513 – crowell.com/en/insights/client-alerts/cmmc-for-ai-defense-policy-law-imposes-ai-security-framework-and-requirements-on-contractors

[6] GlobalData 2026 AI Forecast – verdict.co.uk/small-language-models-centre-stage-2026